(Note: This piece was written in November 2009 as “notes to myself” when I decided to write up a story based on NGDP targeting and see if it fitted the “facts”. I believe the illustrations help in understanding the story.)

It is divided into two parts because it was too long to send in a single e-mail.

Rarely a day goes by without a new analysis of the “cause” of the crisis. Much of the discussion centers on the role played by each item on the “most wanted” list of suspects. These include:

The excess savings in emerging markets (savings glut),

Expansionary monetary and fiscal policies in the advanced economies,

The securitization of finance,

The underestimation of aggregate risks,

The fall in loan underwriting standards,

The errors made by rating agencies,

Regulatory failure,

The aggressive tactics behind mortgage loans,

The public policies related to homeownership.

Most likely, all the “suspects” share some degree of guilt. However, this is not of much help if the goal of the “finger pointing game” is to provide lessons for the future. For example, if the public policies related to the mortgage market and home ownership had not been adopted, maybe the aggressive mortgage loan tactics, the fall in loan standards, the degree of securitization and even the underestimation of risks would have evolved differently.

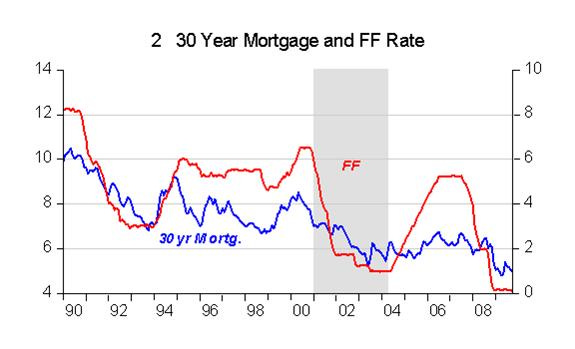

From another angle, and this prompted a defense by Bernanke at the recent AEA meetings in Atlanta, many analysts attach a high weight to monetary policy “errors” allegedly made between 2002 and 2004, when “interest rates were kept too low for too long”. This conclusion follows from comparing the actual Fed Funds (FF) rate with the rate prescribed by the Taylor Rule (TR), as illustrated in figure 1.

Since the FF rate remained significantly below the rate required by the “Rule” (a negative FF-TR gap), monetary policy is interpreted as having been “too easy”, contributing to the increase in the demand for mortgage loans and the consequent unsustainable (“bubble”) rise in house prices.
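To make the FF-TR comparison concrete, here is a minimal sketch of the textbook Taylor (1993) rule. It assumes the original coefficients (0.5 on the inflation gap, 0.5 on the output gap) and a 2% neutral real rate and inflation target; the version behind figure 1 may use different weights or data, and the function names and inputs below are purely illustrative.

```python
def taylor_rule_rate(inflation, output_gap, neutral_real_rate=2.0,
                     inflation_target=2.0, weight_inflation=0.5,
                     weight_gap=0.5):
    """Nominal policy rate (percent) prescribed by a Taylor-type rule.

    All defaults are assumptions taken from the textbook 1993 formulation,
    not values read off figure 1.
    """
    return (neutral_real_rate + inflation
            + weight_inflation * (inflation - inflation_target)
            + weight_gap * output_gap)


def ff_minus_tr(actual_ff_rate, inflation, output_gap):
    """Gap between the actual FF rate and the rule; negative reads as 'too easy'."""
    return actual_ff_rate - taylor_rule_rate(inflation, output_gap)


# Hypothetical inputs chosen only for illustration (not historical data):
# inflation on target, no output gap, FF rate held at 1%.
print(taylor_rule_rate(2.0, 0.0))   # 4.0
print(ff_minus_tr(1.0, 2.0, 0.0))   # -3.0
```

Under these assumed inputs the rule calls for a 4% rate, so an FF rate of 1% shows up as a gap of -3 percentage points, which is the kind of reading that leads to the “too easy” verdict.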

At first sight, as figure 2 shows, this view has merit: there appears to be a close correlation between the FF rate and the 30-year mortgage rate. During 2002-2004, the fall of the FF rate and its subsequent stability at 1% “pulled” the mortgage rate down, which may have contributed to the increase in housing demand (already being driven by the public policies, namely homeownership incentives, adopted for the sector).

However, as figure 3 indicates, the “contribution” of “easy” monetary policy to the “bubble” in house prices may not have been as significant as many believe. Looking again at figure 2, we observe that the mortgage rate remained low even after the FF rate began to rise in mid-2004. Moreover, figure 3 shows that house prices had been rising for some years before the rate reductions and continued to climb after the FF rate began to rise, with prices peaking two years later, in mid-2006.

This pattern (the FF rate rising while long rates remained stable) was labeled a “conundrum” by Greenspan. One “solution” that became famous was provided by Bernanke in 2005 under the name of the savings glut: the “excess” of savings in emerging markets, whose current account surpluses (accumulated as reserves) were reinvested in US Treasury bills, in practice blocked one of the transmission channels of monetary policy.

If figures 1 and 2 constitute evidence in favor of those who blame monetary policy failures for the “bubble” in house prices, the bursting of which is a root cause of the crisis, figure 3 casts some doubt on this narrative, since the behavior of house prices does not change during the period of “low” rates.

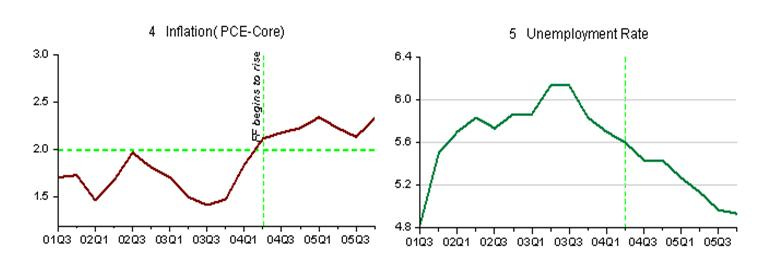

Critics accuse Greenspan of being a “bubble blower”. Since this was certainly not his explicit objective, what motivated the Fed to adopt an “easy” monetary policy in the first half of the last decade? To understand the FOMC’s actions we have to appeal to the Fed’s dual mandate: price stability (interpreted as “low” inflation of 2%) and maximum employment.

Figures 4 and 5 illustrate what the Fed was “seeing” at that moment. Between 2001 and 2003, following the 2001 recession, inflation was below its “target” and falling, while the unemployment rate was on a clear rising trend. In November 2002 Bernanke, at the time a Fed governor, delivered his famous speech “Deflation: Making Sure ‘It’ Doesn’t Happen Here”.

A passage in the speech clearly indicates what was going on in the minds of those responsible for monetary policy: “That this concern is not purely hypothetical is brought home to us whenever we read newspaper reports about Japan, where what seems to be a relatively moderate deflation–a decline in consumer prices of about 1 percent per year–has been associated with years of painfully slow growth, rising joblessness, and apparently intractable financial problems in the banking and corporate sectors”.

Note in the figures that the FF rate began to rise only after inflation had reached the “target” and unemployment was on a clear downward trend.

Figures 4 and 5 go some way toward exculpating Greenspan and the Fed, since the policy implemented was the correct one given the Fed’s dual mandate.

These two opposing views (one, based on Taylor Rules, indicating that monetary policy was “easy”; the other, based on the Fed’s mandate, indicating that policy was correct) lead me to ask, especially in light of the crisis that manifested itself further down the road, whether the objective (an inflation target, even if only implicit in the case of the US) and the instrument (the FF rate) of monetary policy are robust.

There are calculations of the Taylor Rule indicating that the FF rate should now be -5%. Having reached its zero lower bound, however, the FF rate cannot be lowered any further. This feeds the perception that monetary policy becomes powerless just when it is most needed, leaving “fiscal stimulus” as the only alternative to get the economy back on its feet.

Moreover, using an interest rate as the policy instrument creates confusion about the stance of policy. “Low” rates are confused with an “easy” monetary policy (MP) stance and “high” rates with a “tight” one. Japan, for example, has kept its “FF” rate close to zero for many years, but Japanese MP cannot be called “easy”. Between April and September 2008 the FF rate was kept at 2%. As experience has shown, far from indicating that MP was “easy”, this “low” rate reflected a “tight” MP stance!

The Inflation Targeting (IT) regime also gives rise to some problems. By definition the IT regime requires that MP be symmetric. If inflation is below target MP has to be manipulated to bring inflation back on target. Conversely, MP has to constrain inflation when it is above the target.

However, while we may think of inflation above target as being “bad”, a fall in inflation below target (or even some deflation) is not necessarily something “malign”. The perception, evident in the Bernanke quote above, is that even a moderate deflation can be damaging. This is because he, like many others, has in mind the events surrounding the Great Depression, when MP errors resulted in deflation and a steep fall in real output (RGDP).

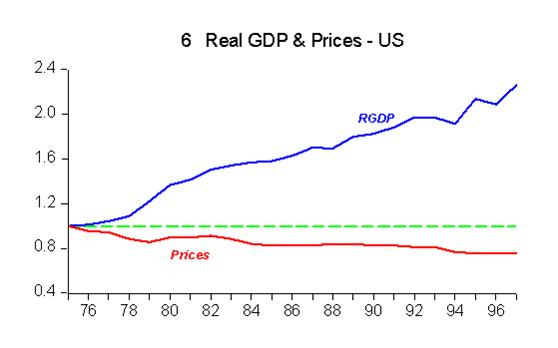

That deflation is not necessarily harmful is illustrated in figure 6, which shows what was happening in the US economy in the last quarter of the 19th century.

While RGDP increased at an average rate of 3.8%, prices fell at 1.3% on average. Note that this is something quite different from the Japanese experience over the last 20 years.
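As a back-of-the-envelope check, the two averages above can be combined into the implied growth of nominal spending. The sketch below assumes both figures are annual averages over the same period; the function name is purely illustrative.

```python
def implied_nominal_growth(real_growth_pct, inflation_pct):
    """Nominal growth (percent) implied by compounding real growth and inflation."""
    real_factor = 1 + real_growth_pct / 100
    price_factor = 1 + inflation_pct / 100
    return (real_factor * price_factor - 1) * 100


# Figures quoted in the text: RGDP +3.8%, prices -1.3% (assumed annual averages).
print(round(implied_nominal_growth(3.8, -1.3), 2))  # 2.45
```

On these assumptions nominal GDP was still growing at roughly 2.5% while prices were falling, which is one way to see why this episode differs from a deflation in which output falls as well.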

Continued in Part 2